Webpage "harvester" for saving a batch of websites

-

Include a "crawler" / "harvester" that makes it possible to save a batch of websites (for example, to PDF).

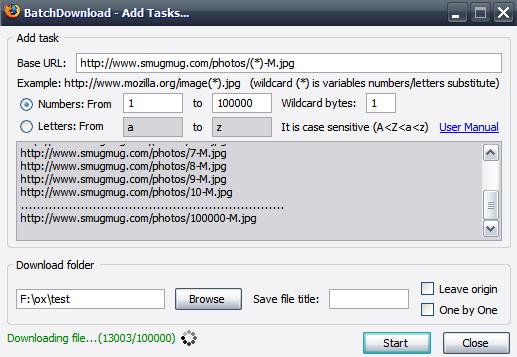

- by typing a URL containing %s, which is replaced by a loop counter (letter or number)

- by recursion (i.e., "save this page and all pages linked from it"), with an editable recursion limit
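The two modes above could be prototyped roughly as follows. This is only a minimal Python sketch of the idea, not how any existing tool works: the names `expand_template`, `extract_links` and `crawl` are hypothetical, fetching a page is passed in as a `fetch` function so the example runs offline, and the recursion is written as an equivalent breadth-first loop in which the start page is depth 0. Actually saving each page (for example to PDF) is left out.

```python
from collections import deque
from html.parser import HTMLParser
from typing import Callable, Iterable, Iterator, List, Optional, Tuple
from urllib.parse import urldefrag, urljoin


def expand_template(template: str, counters: Iterable[object]) -> Iterator[str]:
    """Mode 1 (hypothetical helper): one URL per loop-counter value."""
    for value in counters:
        # str.replace rather than '%' formatting, so '%20' etc. in URLs stay intact.
        yield template.replace("%s", str(value))


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[str] = []

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_links(page_url: str, html: str) -> List[str]:
    """Return absolute link URLs found in html (hypothetical helper)."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    # Resolve relative links against the page URL and strip #fragments.
    return [urldefrag(urljoin(page_url, href)).url for href in parser.links]


def crawl(start_url: str, fetch: Callable[[str], str], max_depth: int) -> List[str]:
    """Mode 2 (hypothetical helper): breadth-first version of "this page and
    all pages linked from it", stopping max_depth link hops from start_url."""
    seen = {start_url}
    visited: List[str] = []
    queue = deque([(start_url, 0)])  # (url, depth); the start page is depth 0
    while queue:
        url, depth = queue.popleft()
        html = fetch(url)  # injected so the sketch needs no network access
        visited.append(url)  # a real harvester would save the page here
        if depth >= max_depth:
            continue  # limit reached: keep this page, don't follow its links
        for link in extract_links(url, html):
            if link not in seen:  # avoid loops and duplicate downloads
                seen.add(link)
                queue.append((link, depth + 1))
    return visited
```

For example, `expand_template("https://example.com/page%s.html", range(1, 4))` yields `page1.html` to `page3.html`, and passing `string.ascii_lowercase` instead of a `range` gives the letter variant. Using a queue plus a `seen` set instead of literal recursion keeps pages that link to each other from being visited twice and avoids deep call stacks.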

Batch functionality like this was, for example, part of Adobe Acrobat:

It is also found in other tools:

-

Thank you for your request. As this post is over 4 years old and has received few votes, it is now going to be archived.

-

LonM moved this topic from Desktop Feature Requests on